Creating 3D digital doubles

“We've even managed to 3D-scan people successfully doing handstands, jumps and even a backflip!”

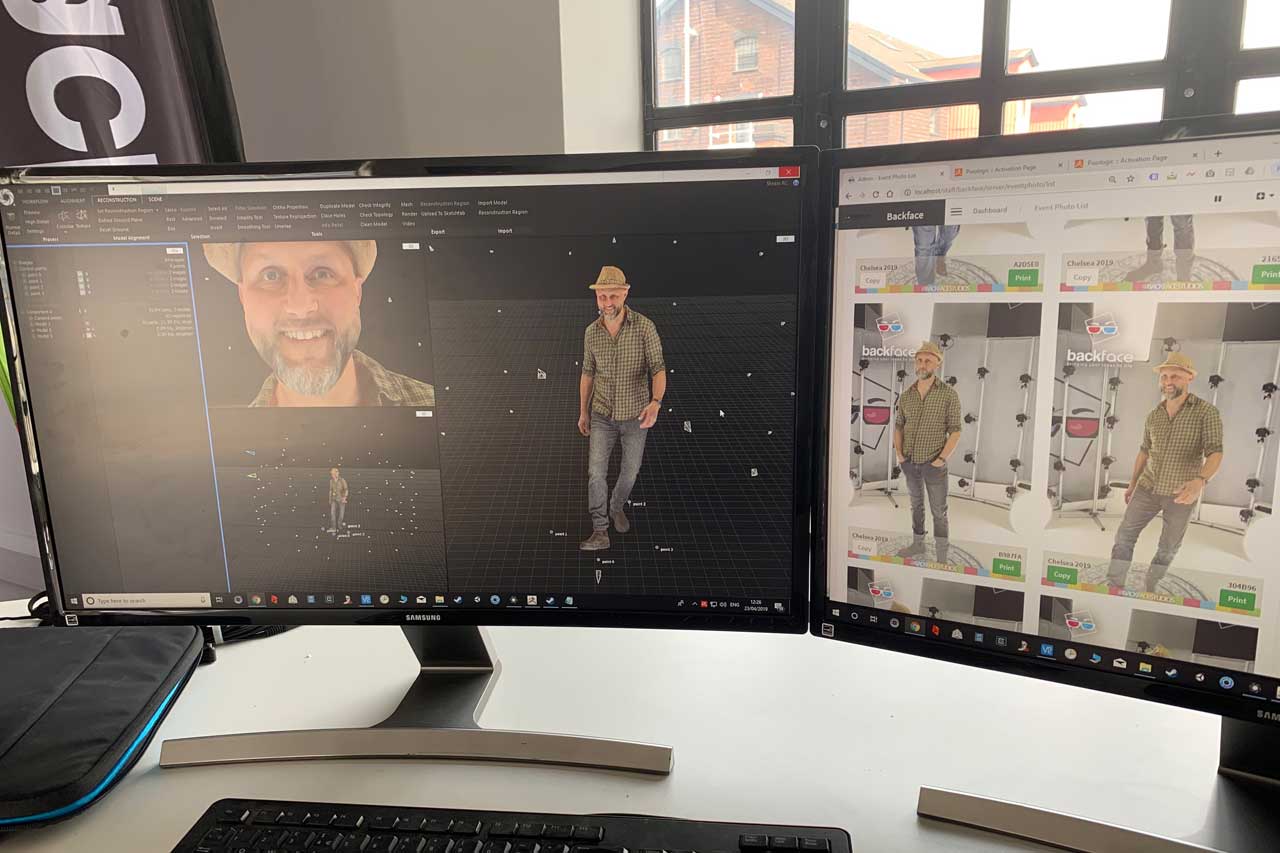

How do you get an ultra-realistic, highly detailed 3D asset of a person quickly? Photogrammetry combined with a full-body scanner is a technique used in the movie industry, video games, VR production and many other fields.

Tim Milward, a director of Backface, shares with us the know-how behind full-body scanning.

For people who are completely new to this topic, could you please explain how the full-body scanner works?

“The scanner uses photogrammetry to work. But instead of using one camera to take lots of photos, we use 96 cameras positioned at various angles all taking one photo each at the same time. The resulting photos can then be used with RealityCapture to produce a 3D coloured mesh.”

If somebody wanted to build a professional full-body scanner, what would they need?

“For our scanner, we use 96 DSLR cameras all fitted with prime lenses of varying focal lengths. We've rigged equipment to power the cameras via cables (we wouldn't want to charge 96 batteries every night!). In our scanning rig, we have 10 PCs with around 10 cameras connected to each PC. These PCs are networked up to a master PC which is used to control the cameras using an API.

As each scan is typically around 2.5GB of data, the master PC uses scripts that we've written to quickly move and back up the scan photos from the cameras, via the rig PCs, to a storage server. We like to use flash photography, which allows us to have a larger depth of field than we could otherwise achieve with bright continuous lighting, so the rig has 6 diffuse flashes. Each camera is connected via its trigger port to a relay system, which lets us fire them all at the same time using a small handheld remote.”
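To make the scale of the rig concrete, here is a rough back-of-the-envelope sketch in Python using only the figures quoted above (96 cameras, 10 rig PCs, about 2.5GB per scan). The round-robin assignment, the function name `assign_cameras` and the use of decimal megabytes are assumptions for illustration; the interview does not say how Backface actually maps cameras to PCs.

```python
# Rough rig arithmetic based on the figures quoted in the interview.
NUM_CAMERAS = 96
NUM_RIG_PCS = 10
SCAN_SIZE_GB = 2.5  # "typically around 2.5GB" per scan


def assign_cameras(num_cameras: int, num_pcs: int) -> list[list[int]]:
    """Spread camera indices over PCs as evenly as possible (round-robin).

    Hypothetical scheme: the real rig's camera-to-PC mapping is not described.
    """
    groups: list[list[int]] = [[] for _ in range(num_pcs)]
    for cam in range(num_cameras):
        groups[cam % num_pcs].append(cam)
    return groups


groups = assign_cameras(NUM_CAMERAS, NUM_RIG_PCS)
cameras_per_pc = [len(g) for g in groups]

# Average size of one photo, assuming 1 GB = 1000 MB.
mb_per_photo = SCAN_SIZE_GB * 1000 / NUM_CAMERAS

print(cameras_per_pc)  # [10, 10, 10, 10, 10, 10, 9, 9, 9, 9]
print(f"{mb_per_photo:.1f} MB per photo on average")  # 26.0 MB per photo on average
```

With these numbers, "around 10 cameras" per PC works out to six PCs with 10 cameras and four with 9, and each photo averages roughly 26 MB.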

Once the full-body scanning rig is built, what can you create with this type of scanner?

“Our custom-built full-body scanner captures the data for a 3D scan in 1/60th of a second, enabling us to 3D-scan a person without them needing to hold still. We've even managed to 3D-scan people successfully doing handstands, jumps and even a backflip!

The resulting 3D model can be used for 3D printing (e.g. an actor’s head 3D-printed for a film), or it can be used directly without printing, for example as a video game character. We also 3D-print complete figures in colour, around 20cm tall, which can be used as the bride and groom on a wedding cake.”

Can you reveal something from your workflow?

“Typically, at the start of the day, we take one good scan of a person wearing clothing that scans well. Using RealityCapture, we run an alignment on this scan, set some ground control points so the scan is to scale, and export the XMP metadata, the reconstruction region and the control points to use with scans later in the day.

Then, with the RealityCapture CLI license, we do the following:

- Align the cameras using the XMP files from the morning scan

- Import the flight log and control points from the morning scan

- Set the reconstruction region

- Reconstruct the scan using normal detail

- Crop the model to fit a newly imported reconstruction region

- Select and delete large triangles

- Select the largest component of the scan (hopefully the person!) and delete the rest

- Clean the model

- Simplify the model

- Texture the model

- Export the model as an OBJ with texture”
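Two of the cleanup steps listed above, deleting large triangles and keeping only the largest component, can be illustrated outside RealityCapture. The following is a minimal Python sketch on a toy mesh. It is not RealityCapture's implementation and does not use its CLI; the function names, the `max_edge` threshold and the sample data are all assumptions made for illustration.

```python
import math
from collections import Counter

Vertex = tuple[float, float, float]
Triangle = tuple[int, int, int]  # indices into the vertex list


def longest_edge(tri: Triangle, vertices: list[Vertex]) -> float:
    """Length of the longest edge of a triangle."""
    a, b, c = (vertices[i] for i in tri)
    return max(math.dist(a, b), math.dist(b, c), math.dist(c, a))


def drop_large_triangles(triangles, vertices, max_edge):
    """Remove triangles whose longest edge exceeds max_edge (assumed threshold)."""
    return [t for t in triangles if longest_edge(t, vertices) <= max_edge]


def keep_largest_component(triangles):
    """Keep only the triangles of the largest vertex-connected component."""
    if not triangles:
        return []
    parent: dict[int, int] = {}

    def find(x: int) -> int:
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    def union(x: int, y: int) -> None:
        rx, ry = find(x), find(y)
        if rx != ry:
            parent[ry] = rx

    for a, b, c in triangles:
        union(a, b)
        union(b, c)

    # Count triangles per component and keep the most populated one.
    sizes = Counter(find(t[0]) for t in triangles)
    biggest_root = sizes.most_common(1)[0][0]
    return [t for t in triangles if find(t[0]) == biggest_root]


# Toy data: vertices 0-3 form the "person", 4-6 a floating fragment.
vertices = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (1, 1, 0),
            (5, 5, 5), (6, 5, 5), (5, 6, 5)]
triangles = [(0, 1, 2), (1, 3, 2),  # person
             (4, 5, 6),             # stray fragment
             (3, 4, 0)]             # stretched triangle bridging the two

cleaned = keep_largest_component(
    drop_large_triangles(triangles, vertices, max_edge=2.0)
)
print(cleaned)  # [(0, 1, 2), (1, 3, 2)]
```

The order matters in this toy example: the stretched triangle bridges the person and the floating fragment, so removing it first is what allows the largest-component step to discard the fragment.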

Why did you choose RealityCapture as your photogrammetry software?

“The speed at which RealityCapture works is a huge advantage. We've also found its hole filling is more accurate than that of competitors. The ability to use manual control points to bring together many components is invaluable and, of course, without the CLI, we wouldn't be able to process the huge number of scans from an event without many hours of manual processing.”

What is the trickiest part of full-body scanning? Is there something particular you have to pay attention to?

“Clothing makes a big difference in how good a scan of a person will come out. Patterns, textures and creases really help get a good scan as they enable RealityCapture's algorithms to find many unique points to match.

The pose is also a factor in how well the scan will come out. If the pose is too wide, then parts of the body might not fit in many of the photos. It's also possible that in some poses, like kneeling or sitting, some parts of the body can obscure other parts, blocking the view of the cameras.”

This year you took part in The Big Bang Fair, the Birmingham science fair for children. What were you presenting there?

“We'd been invited to attend to inspire young people about some interesting career paths that they could follow in the fields of science, technology, engineering and math. The visitors got to have a go in our scanner and were all emailed after the show with a link to view their 3D scan online.

We were able to explain how the technology works and how they'd be able to replicate it at home with just one camera. The event was really successful and the scanning extremely well received.”

Backface is a company based in Birmingham, UK, that specializes in human 3D scanning and 3D printing. See more of their work on Sketchfab.